For decades, training measurement in Learning and Development (L&D) has focused on activity. How many employees logged in? How many courses were completed? How many certificates were issued?

These metrics were easy to collect, report, and scale. In a world where learning content was scarce and expensive to produce, they made sense.

But that world has changed.

Artificial intelligence has dramatically reduced the time and effort required to create learning content. What once took weeks can now be done in minutes. Courses, simulations, guides, and microlearning modules can be produced faster than organizations can consume them. When content becomes abundant, activity ceases to be a meaningful signal of progress.

And yet, most learning programs still rely on training measurement that cannot show whether learning leads to meaningful behavior change or business value. According to CIPD research, 30% of business leaders reviewing HR and L&D metrics say the figures don't give them the full picture, and 22% say it's not clear how the data connects to organizational priorities.

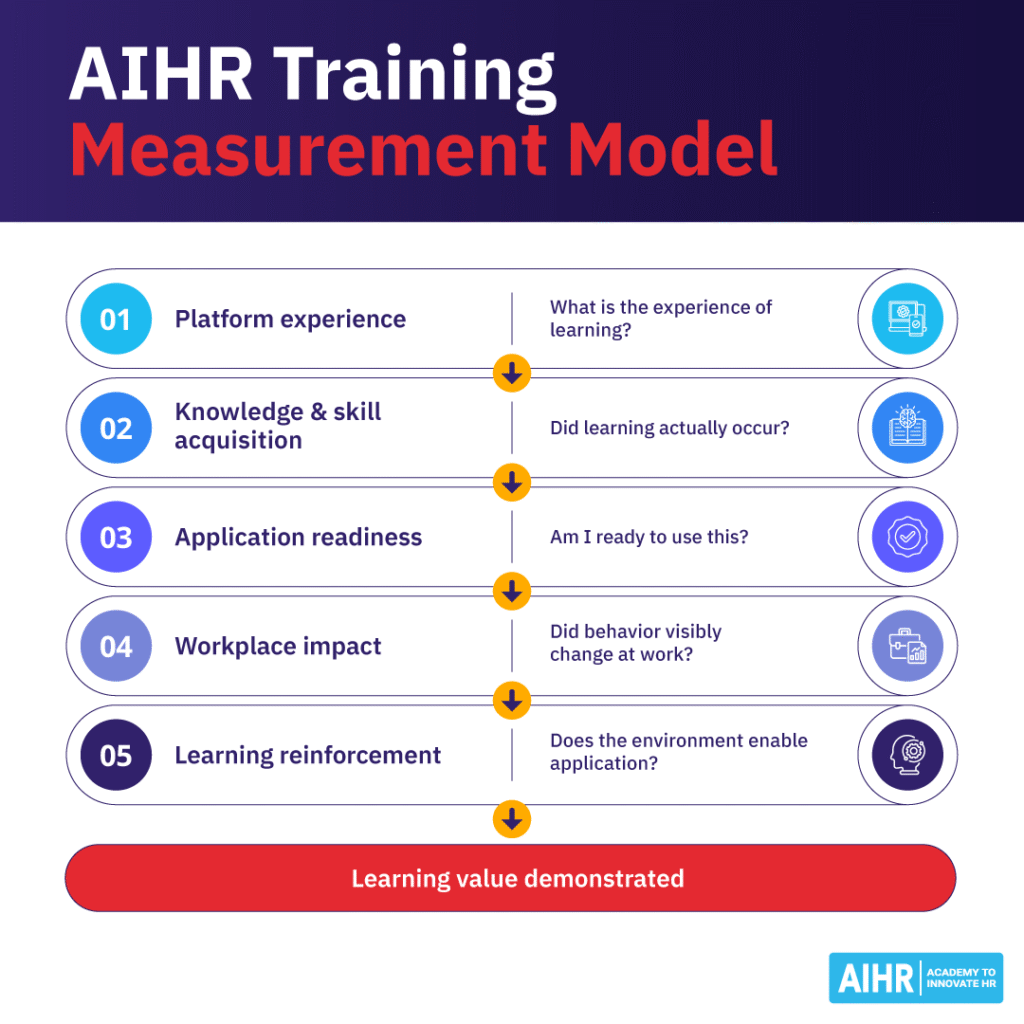

In this article, we explore the learning value measurement model adopted at AIHR. The model evaluates learning value from multiple stakeholder perspectives and builds on traditional frameworks, such as Bloom’s Taxonomy, Kirkpatrick, and the Phillips ROI model, to measure learning value in self-paced online learning environments.

Contents

Why traditional training measurement falls short

A 5-layer training measurement model for measuring learning value

Why AIHR focuses on learning value rather than learning impact

Why traditional training measurement falls short

Most L&D teams are highly effective at training measurement when it comes to learning activity and consumption. Someone watches a video. Someone completes a module. Someone rates the course four stars. Over time, we have built sophisticated systems to capture all of this, and many dashboards are filled with these metrics.

But consumption is not proof of value.

Traditional learning metrics such as completions, clicks, and satisfaction scores can show participation, but they say little about actual capability building.

At AIHR, we define learning value as the extent to which learning builds capability, leads to meaningful behavior change, supports the original purpose of the learning intervention, and aligns with business outcomes. This is why training effectiveness should be evaluated through a broader lens than course completion or content engagement alone.

The real question, then, is not whether someone clicked through a module, but whether they do their job differently because of it.

A helpful way to think about modern training measurement is through three levels:

- Activity reflects what people consume.

- Learning reflects what they understand.

- Value reflects what they actually do differently, and the impact those changes create in the business.

This distinction is not new. Bloom’s taxonomy already separated knowledge acquisition from higher-order outcomes such as application and synthesis. The Kirkpatrick model, with its four levels of reaction, learning, behavior, and results, has shaped L&D evaluation for more than 60 years, while the Phillips ROI model extended this logic by linking learning outcomes to financial impact.

These models laid the foundation for many of today’s learning and development KPIs, even if they do not fully reflect the realities of modern digital learning.

Many of the models, I think, are limiting because they overcomplicate what we really need to do to show that what we're investing in is working. Simplicity can make a real difference for people who are struggling with measurement. So what metrics should we be looking at? It's not really about the metrics, because I think that might box us into trying to standardize. Not surprisingly, I am not a proponent of standardization across organizations. I do think you can create some standard procedures within an organization, and as long as everybody buys in, understands why these systems and procedures matter, and follows them, it will work.

I do believe it's important to be able to benchmark or baseline, to compare apples to apples. One way to do that is to create something like an alignment score: how well is our program aligned with what matters to the business? You can then have an alignment score for every initiative your team is accountable for, which lets you compare them directly. Our alignment score would show that one program is clearly aligned with solving a business problem or achieving a business goal, whereas for another initiative, the clarity is simply not there.
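The alignment score described in this quote could be operationalized in many ways. The Python sketch below is one hypothetical version: it assumes each initiative is rated 0 to 2 on four illustrative criteria and expresses the total as a percentage, so every initiative sits on the same scale. The criterion names, the rating scale, and the `alignment_score` and `rank_by_alignment` helpers are assumptions for illustration, not a method defined by AIHR or the speaker.

```python
from dataclasses import dataclass, field

# Hypothetical alignment criteria; each is rated 0 (absent), 1 (partial), or 2 (clear).
CRITERIA = (
    "business_problem_defined",
    "capability_gap_identified",
    "target_behaviors_specified",
    "success_measure_agreed",
)
MAX_RATING = 2


@dataclass
class Initiative:
    name: str
    ratings: dict[str, int] = field(default_factory=dict)  # criterion -> 0..MAX_RATING


def alignment_score(initiative: Initiative) -> float:
    """Return alignment as a percentage of the maximum possible rating."""
    total = 0
    for criterion in CRITERIA:
        rating = initiative.ratings.get(criterion, 0)  # an unrated criterion counts as absent
        if not 0 <= rating <= MAX_RATING:
            raise ValueError(f"{criterion} rating out of range: {rating}")
        total += rating
    return 100 * total / (MAX_RATING * len(CRITERIA))


def rank_by_alignment(initiatives: list[Initiative]) -> list[tuple[str, float]]:
    """Score every initiative on the same scale and sort, most aligned first."""
    scored = [(i.name, alignment_score(i)) for i in initiatives]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Illustrative portfolio with made-up ratings.
portfolio = [
    Initiative("Generic e-learning library", {"capability_gap_identified": 1}),
    Initiative("HRBP strategic advisory", {
        "business_problem_defined": 2,
        "capability_gap_identified": 2,
        "target_behaviors_specified": 2,
        "success_measure_agreed": 1,
    }),
]
for name, score in rank_by_alignment(portfolio):
    print(f"{name}: {score:.1f}%")
```

Because every initiative is scored against the same criteria, the percentages can be compared across the whole portfolio; the weighting of criteria is a design choice each organization would need to agree on internally.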

A 5-layer training measurement model for measuring learning value

AIHR's training measurement model builds on established training effectiveness models but updates them for the realities of modern digital learning. It introduces application readiness as a leading indicator, separates platform experience from learning outcomes, and grounds each layer in contemporary behavioral science, while explicitly recognizing the different stakeholders involved in creating and measuring learning value.

Equally important is starting with the business problem that the learning is intended to address. Learning should never exist as an isolated activity or as content looking for an audience. It should be designed to address a specific capability gap that prevents the organization from executing its strategy or improving performance.

This starting point shapes how learning value is defined and measured. When the underlying business challenge is clear, it becomes easier to determine which capabilities need to change, what behaviors should look different, and how success can be observed in the workplace. Measurement then moves beyond participation metrics to assess whether the learning intervention actually helped address the original problem it was designed to solve.

AIHR’s training measurement model comprises five connected layers that help L&D teams assess learning value more credibly:

- Layer 1. Platform Experience: “What is the experience of learning?”

- Layer 2. Knowledge and Skill Acquisition: “Did learning actually occur?”

- Layer 3. Application Readiness: “Am I ready to use this?”

- Layer 4. Workplace Impact: “Did behavior visibly change at work?”

- Layer 5. Learning Reinforcement: “Does the work environment enable application?”

The first four layers track the pathway from learning experience to workplace behavior change. The fifth captures the organizational conditions that influence whether learning is applied and sustained over time.

Each layer matters because learning value breaks down when any one of them is missing:

- Platform Experience missing: Learners disengage before value is created, and capability never builds.

- Knowledge and Skill Acquisition missing: Learners enjoy the experience, but nothing sticks. The result is a pleasant experience with little value.

- Application Readiness missing: Skills are gained but never deployed or embedded, leaving a gap between knowing and doing.

- Workplace Impact Measurement missing: Change happens invisibly. Value exists but cannot be demonstrated.

- Learning Environment missing: Motivated learners return to the workplace with new skills and ready to use them, but the environment extinguishes new behaviors and learning evaporates.
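One way to make these failure modes actionable is to treat them as a diagnostic. The minimal Python sketch below assumes each layer has already been summarized as a score between 0 and 1; the layer keys, the 0.6 threshold, and the `diagnose` function are hypothetical. It reports the likely failure mode for every layer that falls short, and it treats a layer with no data as weak, mirroring the point that unmeasured change cannot be demonstrated.

```python
# Failure modes from the model, keyed by (hypothetical) layer names in pathway order.
FAILURE_MODES = {
    "platform_experience": "Learners disengage before value is created; capability never builds.",
    "knowledge_and_skill": "Learners enjoy the experience, but nothing sticks.",
    "application_readiness": "Skills are gained but never deployed: the knowing-doing gap.",
    "workplace_impact": "Change happens invisibly: value exists but cannot be demonstrated.",
    "learning_reinforcement": "The environment extinguishes new behaviors and learning evaporates.",
}


def diagnose(scores: dict[str, float], threshold: float = 0.6) -> list[tuple[str, str]]:
    """Return (layer, likely failure mode) for every layer scoring below threshold.

    A layer missing from `scores` is treated as 0.0, because a layer that was
    never measured cannot be shown to be working.
    """
    weak = []
    for layer, failure in FAILURE_MODES.items():  # dicts preserve insertion order
        if scores.get(layer, 0.0) < threshold:
            weak.append((layer, failure))
    return weak


# Illustrative program: readiness is low and workplace impact was never measured.
program = {
    "platform_experience": 0.9,
    "knowledge_and_skill": 0.8,
    "application_readiness": 0.45,
    "learning_reinforcement": 0.7,
}
for layer, failure in diagnose(program):
    print(f"{layer}: {failure}")
```

A single threshold across layers is a simplification; in practice each layer would likely be measured on its own scale and judged against its own benchmark.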

Let’s look at each of the five layers in more detail.

Layer 1. Platform Experience: “What is the experience of learning?”

Learning value starts with the learner experience. Two factors matter most. The first is ease of use. The platform should be intuitive and frictionless so that learners can focus on the content rather than the technology. The second is expectation fit. The learning experience should deliver the level of interactivity, structure, and practical application opportunities that learners expect.

At this level, engagement-based learning metrics still play an important role. Completion rates, interaction data, and usability feedback help determine whether the learning experience is accessible and easy to understand. These are useful early indicators, but they are not enough on their own to assess training effectiveness. A strong platform experience does not create learning value on its own, but a poor one can quickly undermine it by preventing learners from engaging with the material effectively.

From a stakeholder perspective, these metrics are primarily used by our product and content teams. They help evaluate whether the platform and learning materials meet the expectations they were designed to fulfill.

Layer 2. Knowledge and Skill Acquisition: “Did learning actually occur?”

Once learners can access and understand the material, the next question is whether they can actually use it. At this stage, learning moves beyond simple recall. For many teams, this is also where training KPIs stop, even though skill acquisition alone does not guarantee workplace application.

Learners apply concepts to realistic situations and explore different ways of solving problems. Assessments, therefore, move beyond knowledge checks and focus on scenario-based challenges that test whether learners can recognize when and how to apply what they have learned.

This stage confirms that employees have gained new knowledge or skills. However, it does not guarantee that these capabilities will translate into workplace impact. Pfeffer and Sutton's work on the "knowing-doing gap" illustrates that knowing what to do and actually doing it at work are fundamentally different challenges. Assessment designs that do not account for this distinction tend to overestimate learning transfer.

Our own data reinforces this insight. Effective training measurement should therefore assess not just whether someone knows something, but whether they are ready to use it.

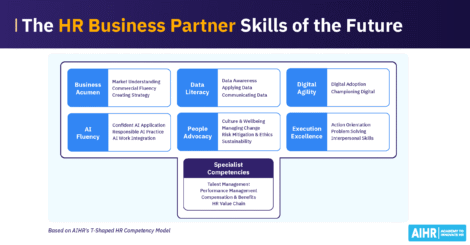

Effective application requires not only competence but also confidence. Measuring this level helps our subject-matter expert teams evaluate whether our content and learning experience are successfully building the skills we promise as part of our T-shaped competency model.

Layer 3. Application Readiness: “Am I ready to use this?”

This is one of the most overlooked layers in learning measurement and one of the most predictive. Between knowing and doing sits a critical question: Does the learner feel ready to apply what they learned in the real world?

Application readiness captures the psychological bridge between learning and behavior change. It measures three things:

- Whether learners see the relevance of what they’ve learned to their actual role,

- Whether they feel confident enough to apply it, and

- Whether they intend to apply it.

Before applying new skills at work, employees typically ask themselves a few practical questions: Is this relevant to my role? Do I feel confident trying this? When would I actually use it?

This stage is grounded in well-established behavioral science. Bandura's self-efficacy theory shows that confidence in one's ability to perform a behavior is one of the strongest predictors of whether that behavior will occur. Similarly, Ajzen's Theory of Planned Behavior identifies intention as the most immediate predictor of future action.

For L&D leaders, readiness metrics provide something extremely valuable: predictability. That makes them one of the most useful leading indicators of training effectiveness. If learners complete a course without confidence or a clear intention to apply what they've learned, meaningful behavior change is unlikely, no matter how strong the content itself is.

This metric is used internally by our teams and by our clients to evaluate whether they believe their teams will be able to apply the learning back at work. It is often measured at the end of the learning journey to allow corrective action before learners return to the workplace.
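A minimal Python sketch of how the three readiness components (relevance, confidence, intention) might be captured at the end of a course, assuming a hypothetical survey with one 1-to-5 agreement item per component. The `ReadinessResponse` type, the cutoff of 3, and the rule that any single low component flags a learner for follow-up are illustrative assumptions; the flag is what would allow corrective action before learners return to work.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class ReadinessResponse:
    """One learner's end-of-course answers on a 1-5 agreement scale (hypothetical survey)."""
    learner_id: str
    relevance: int   # e.g. "This is relevant to my role."
    confidence: int  # e.g. "I feel confident applying this."
    intention: int   # e.g. "I plan to apply this in the coming weeks."


LOW = 3  # assumed cutoff: answers at or below "neutral" count as low


def needs_follow_up(r: ReadinessResponse) -> bool:
    # Any single weak component flags the learner, since behavior change is
    # unlikely without relevance, confidence, and intention together.
    return min(r.relevance, r.confidence, r.intention) <= LOW


def cohort_summary(responses: list[ReadinessResponse]) -> dict[str, float]:
    """Average each component and report the share of learners flagged."""
    if not responses:
        raise ValueError("no responses to summarize")
    return {
        "relevance": mean(r.relevance for r in responses),
        "confidence": mean(r.confidence for r in responses),
        "intention": mean(r.intention for r in responses),
        "share_flagged": sum(needs_follow_up(r) for r in responses) / len(responses),
    }


# Illustrative, made-up responses.
responses = [
    ReadinessResponse("a", relevance=5, confidence=4, intention=5),
    ReadinessResponse("b", relevance=4, confidence=2, intention=4),
    ReadinessResponse("c", relevance=5, confidence=5, intention=3),
]
print(cohort_summary(responses))
print([r.learner_id for r in responses if needs_follow_up(r)])
```

Reporting the components separately, rather than a single blended score, shows whether low readiness stems from relevance, confidence, or intention, which points to different corrective actions.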

Layer 4. Workplace Impact: “Did behavior visibly change at work?”

Learning value becomes visible when behavior changes on the job through observable shifts in how people work. This layer is also where talent development metrics become more meaningful because they can be tied to behavior, performance, and business contribution.

Unlike application readiness, which captures whether learners feel prepared to act, workplace impact captures whether that change is actually visible on the job.

Employees solve problems differently, design new approaches, and contribute more effectively to organizational goals. Managers notice changes. Teams adopt improved workflows. Decisions become better informed.

It is also the most difficult layer to measure because it requires moving beyond the LMS and into the work itself. Yet this is precisely where learning proves its value. Most L&D functions stop short of measuring behavioral change, so those that do measure it gain a fundamentally different level of organizational credibility. Research by Watershed shows that organizations recognized as strong learning organizations are twice as likely to use performance improvement as their primary measure of learning success.

At this level, incorporating data from 360-degree feedback, performance reviews, and other performance-related indicators becomes critical to evaluating training effectiveness. These sources help identify whether the intended behavioral changes are actually occurring on the job.
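As a sketch of how 360-degree data might be used here, assume the same raters score a target behavior (for example, bringing workforce insights into planning discussions) on a 1-to-5 scale before a program and several months after it. The Python below computes the average within-person change and, optionally, subtracts the change seen in a comparison group that did not take part. That subtraction is a standard difference-in-differences estimate, swapped in here for illustration; it is not part of AIHR's model as described, and all names and data are hypothetical.

```python
from statistics import mean

# Hypothetical 360-degree ratings (1-5) for one target behavior, keyed by employee.
Ratings = dict[str, float]


def mean_change(baseline: Ratings, follow_up: Ratings) -> float:
    """Average within-person change for employees rated at both time points."""
    common = baseline.keys() & follow_up.keys()
    if not common:
        raise ValueError("no employees rated at both time points")
    return mean(follow_up[e] - baseline[e] for e in common)


def net_change(trained_before: Ratings, trained_after: Ratings,
               comparison_before: Ratings, comparison_after: Ratings) -> float:
    """Difference-in-differences: participants' change minus comparison-group change.

    Subtracting the comparison group's drift removes shifts that affected everyone
    (a new strategy, a reorganization), but the result still estimates
    contribution rather than proving that learning alone caused the change.
    """
    return (mean_change(trained_before, trained_after)
            - mean_change(comparison_before, comparison_after))


# Illustrative, made-up ratings.
trained_before = {"ana": 2.5, "ben": 3.0, "cy": 2.0}
trained_after = {"ana": 3.5, "ben": 3.5, "cy": 3.0}
comparison_before = {"dee": 3.0, "eli": 2.5}
comparison_after = {"dee": 3.25, "eli": 2.75}

print(round(mean_change(trained_before, trained_after), 2))
print(round(net_change(trained_before, trained_after,
                       comparison_before, comparison_after), 2))
```

Only employees rated at both time points are included, so people who joined or left mid-program do not distort the average change.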

…answering the simple question: did our investment do what it said it was going to do? Did we accomplish what we invested in this program for in the first place? Did we accomplish what we set out to accomplish?

At AIHR, this layer often sits outside the direct scope of our programs. However, we support our clients in measuring these outcomes and in connecting observed performance changes back to the learning interventions that enabled them.

Layer 5. Learning Reinforcement: “Does the work environment enable application?”

The final layer looks beyond the learning intervention itself. Learning does not happen in isolation, and behavior change rarely depends on training alone. Whether new skills are applied and sustained depends heavily on the work environment around the learner.

Learning takes place in organizations shaped by competing priorities, inconsistent management, misaligned incentives, and cognitive overload. Baldwin and Ford’s influential 1988 transfer-of-training model identified three factors that determine whether learning transfers: training design, trainee characteristics, and, critically, the work environment. Decades of research have consistently confirmed that workplace conditions, particularly manager support and opportunities to apply new skills, are among the strongest predictors of whether learning translates into behavior change.

In practice, we focus on three factors that most consistently determine whether learning translates into value or quietly fades after the course ends.

- Incentive alignment: If the organization rewards the old behavior, the new one will not stick. Learning cannot overcome a compensation structure or performance management system that points in the opposite direction.

- Manager reinforcement: Managers are arguably the most powerful variable in learning transfer. A meta-analysis by Blume et al. found that supervisor support is one of the most consistent predictors of training transfer across studies, often stronger than the quality of the training itself. Gartner research reinforces this point: when managers actively embed new behaviors into day-to-day interactions with their teams, employee performance increases by up to 35%.

- Opportunity to apply: Sometimes employees genuinely want to apply what they have learned but simply cannot. The tools are missing, processes prevent it, or the team culture discourages experimentation. These are not learning failures; they often need to be resolved through organizational design, process reengineering, and better access to tools.

Seeing the model in practice

Consider a company redefining the role of its HR Business Partners (HRBPs). Historically, HRBPs focused on operational support, such as handling employee issues, coordinating processes, and responding to requests. Leadership now expects them to act as strategic advisors, contributing to workforce planning, talent decisions, and organizational design. The challenge is not simply to train HRBPs, but to change how HR operates within the business.

Using AIHR’s training measurement model reshapes both the program and how its outcomes are evaluated.

- Layer 1: Platform experience. HRBPs engage with an intuitive learning platform designed around interactive, scenario-based exercises rather than passive content. Engagement data and usability feedback confirm that the learning experience is accessible and aligned with participants’ expectations.

- Layer 2: Knowledge and skill acquisition. Participants work through realistic business cases: interpreting workforce analytics, identifying talent risks, and recommending strategic actions. Scenario-based assessments test whether they can apply the concepts to real organizational challenges.

- Layer 3: Application readiness. After completing the program, HRBPs assess whether the new capabilities are relevant to their role, whether they feel confident applying them, and whether they intend to use them in upcoming conversations with business leaders. Where readiness is low, targeted coaching reinforces learning before the opportunity for impact is lost.

- Layer 4: Workplace impact. Several months later, the focus shifts to the workplace itself. Are HRBPs bringing workforce insights into planning discussions? Are they influencing talent decisions rather than simply responding to requests?

- Layer 5: Learning reinforcement. The team also investigates whether HRBPs are included in strategic forums and whether their mandate has shifted to align with the new role expectations.

Why AIHR focuses on learning value rather than learning impact

A natural question about this model is why we refer to learning value rather than learning impact. The distinction matters because good training measurement should show contribution without overstating causality.

The reason is simple. True organizational impact is rarely the result of learning alone.

When outcomes such as productivity, revenue growth, or organizational performance improve, many factors are at play. Strategy shifts, leadership decisions, market conditions, technology, team dynamics, and organizational structures all play a role. In complex systems like organizations, isolating learning as the sole driver of impact is rarely realistic.

For this reason, AIHR’s model focuses on learning value instead, giving L&D leaders a more credible and practical framework for training measurement. Value captures the contribution learning makes along the pathway from capability development to behavioral change at work. It reflects whether learning builds skills, increases readiness to apply those skills, and leads to observable changes in how people perform their roles.

Impact may still occur, and organizations should absolutely track broader business outcomes. However, learning value allows L&D to measure what it can most credibly influence and demonstrate how learning contributes to improved performance without overclaiming causality in complex organizational systems.

How can we use learning analytics to tell the story of the value of learning, and prove that value through our learning analytics? The answer is, you can't. If you think of it as a story, learning analytics are just one part of the story of what unfolds when people engage in learning initiatives. There are a lot of other data points we look at to understand the impact, or to prove: did your initiative do what it was intended to do?

Final words

AI has already changed how learning is created. The next step is for HR and L&D leaders to rethink training measurement so it reflects learning value rather than learning activity.

The real question is whether HR and L&D leaders will use this disruption to finally measure what matters.

Activity metrics once helped L&D scale and report participation, but they do not predict value. In a world where content is abundant and knowledge is everywhere, access to learning is no longer scarce. Capability is.