Training effectiveness evaluation is an important practice in data-driven HR, but it often does not get the attention it deserves. This post outlines where many training effectiveness evaluations fall short, as well as how they can be improved, using unconscious bias training programs as an example.

Over the last few years, many organizations have recognized unconscious bias as a very real and persistent problem. Loosely defined as subconscious prejudices against groups that affect our behaviour without us even realising it, unconscious bias can lead to unfair discrimination in the workplace. Given that such discrimination often disadvantages groups like women and ethnic minorities, many companies have started offering training programs to help reduce the negative effects of unconscious bias.

These programs have become especially popular in the tech industry, with early adopters like Google and Facebook leading the charge. Despite all this excellent work and progress, though, an important question arises:

How can organizations make sure that their unconscious bias training programs are actually effective in reducing the negative effects of such bias?

Most organizations that have evaluated such programs report only increases in awareness/understanding or positive participant reactions. In other words, they report whether participants:

a) liked the program

b) learned something

While these are important pieces of information to collect, they do not tell us whether these programs actually reduce the influence of unconscious bias.[1] Nor is this a problem unique to unconscious bias training programs; it is a problem with training effectiveness evaluation overall, and unconscious bias programs are just a current example.

As HR analytics professionals, we always have to ensure that the data we are collecting speak to the questions we want answered. Gathering data on reactions and awareness may be convenient, but we need to expand our report cards for our training programs in order to help them improve. I could personally go online to Merlin’s Realm and undergo training to become a wizard. I may:

a) enjoy the program

b) learn some wizardly knowledge

but does that mean I will be able to cast a spell to make myself fly afterwards? Sadly, the answer is no.

The Kirkpatrick model, seen below, highlights two additional, very important criteria for training effectiveness evaluation that many organizations miss:

The training effectiveness evaluation criteria I have described thus far fall under levels 1 (Reaction) and 2 (Learning). Reaction essentially describes the degree to which trainees find the program enjoyable and relevant, whereas learning describes the degree to which trainees gain knowledge, attitudes, and commitment based on the training. What about levels 3 (Behaviour) and 4 (Results) though?

Behaviour refers to how much trainees actually change their behaviour, based on what they learned, once they are back on the job. In an unconscious bias context, a relevant behaviour could be pausing to consider whether bias might be at play while making a decision. While it is indeed a bit tougher to collect data on this than on levels 1 and 2, it’s far from impossible. You could:

a) present trainees with behaviours and ask how often they’ve done them in the past week

b) incorporate relevant behaviours into performance evaluations that managers conduct

Results refers to the degree to which specific outcomes occur as a result of the training. In an unconscious bias training program, it is what we’ve been looking for all along: reductions in the influence of unconscious bias. While it is difficult to establish that a given result occurred because of bias training directly, there are probably data you already collect that could point in the right direction. For example, you could look at:

a) differences in discrimination-related complaint numbers

b) the performance of diverse employees

By incorporating these two additional criteria, you can get much better insight into the effectiveness of your training programs. Another major question arises at this point, though: who should you compare training participants with in order to find those differences? Two strategies arise:

a) comparing the scores of employees who took the program to those who did not

b) comparing the scores of employees before the program to themselves after it

The major problem with strategy (a) is that even before going through the program, the employees who choose to participate in it may be fundamentally different from those who do not. For example, participants could be more motivated to reduce their unconscious bias than non-participants, and may already behave in ways that are accommodating to diverse individuals.

Strategy (b) addresses the issue in (a) in that participants are compared to themselves, removing the influence of individual differences. It has its own problem, though, in that something besides the program could happen between the before and after program measures that changes the outcome, but is completely unrelated to the program. For example, in the middle of the program the CEO could send an email reaffirming the importance of respecting diverse individuals, leading to more employee awareness of such issues that is completely unrelated to the program.

While both (a) and (b) have their own flaws, each can cover the other’s limitations. If you compare employees who participate in the program both to themselves before the program and to similar employees who didn’t undergo the training, you can get the answers you’re looking for with a lot more certainty! A further recommendation is to collect the same training effectiveness evaluation data again a few months after the training ends, as a follow-up. Training effects sometimes degrade over time, and it is worth finding out whether they persist.
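Combining the two strategies in this way is commonly called a difference-in-differences comparison: you take the before-to-after change among participants and subtract the before-to-after change among similar non-participants. Here is a minimal Python sketch of that arithmetic. The function name and all of the scores are hypothetical, made up purely for illustration (imagine self-reported counts of how often someone paused to check for bias in the past week), and a real evaluation would also need a significance test and a carefully chosen comparison group.

```python
from statistics import mean


def difference_in_differences(trained_before, trained_after,
                              control_before, control_after):
    """Change in the trained group minus change in the comparison group."""
    trained_change = mean(trained_after) - mean(trained_before)
    control_change = mean(control_after) - mean(control_before)
    return trained_change - control_change


# Hypothetical scores, invented for this example only.
trained_before = [2, 3, 2, 3]   # participants, before the program
trained_after = [4, 5, 4, 5]    # participants, after the program
control_before = [2, 2, 3, 3]   # similar non-participants, same "before" date
control_after = [3, 3, 4, 4]    # similar non-participants, same "after" date

effect = difference_in_differences(trained_before, trained_after,
                                   control_before, control_after)
print(effect)  # 1.0
```

In this made-up data both groups improved, perhaps because of something like the CEO email mentioned above, but participants improved by one point more than non-participants, and that extra point is the part you could more plausibly attribute to the program. Running the same calculation on the follow-up data would show whether the gap persists.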

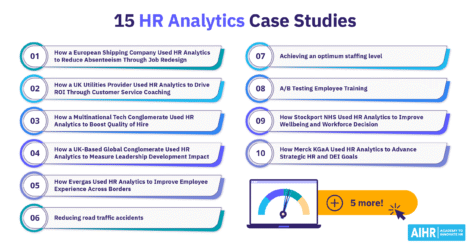

In closing, I don’t want to minimize the great work that organizations like Google and Facebook have done, or give the impression that you always need to evaluate training on all four levels, before and after, and against a comparison group. I honestly love all the energy in the industry to reduce bias, and am more than aware that HR analytics professionals are limited in their time, resources, and access to data.

Instead, I simply want to challenge you to identify just a couple of the elements I’ve mentioned that are feasible for you to add, and incorporate them into your training effectiveness evaluation. By making our evaluations more rigorous bit by bit, we can improve our unconscious bias training programs, and help systematically reduce the influence of such biases in our workplaces. We’re all on the same team here, so let’s continue building up both our training programs, and each other!

1. A note for social science nerds: the conventional argument in favour of using awareness as an evaluation criterion is that there is academic evidence that increasing awareness of stereotypes / unconscious bias can take away some of their power. Several of these studies are summarized in Kray and Thompson (2004). While this definitely seems to be the case, a couple of things should be noted: 1) “awareness” had very specific operationalizations in these studies that don’t completely match this context, 2) the contexts and samples of the studies do not match unconscious bias training very well, 3) many of the effect sizes are fairly small, and 4) several of these studies have experienced problems with replication. Even if we assume these problems aren’t damning, though, there remains the fact that what works in one population or organization does not necessarily work in another, and it remains worthwhile to evaluate which programs are working, and which are not, in which contexts.