In brief

- Businesses face the ‘AI chasm,’ where enthusiasm for AI doesn’t translate into impactful organizational change.

- Most organizations are still experimenting without a clear value definition or prioritization of use cases.

- Five structural bottlenecks hinder progress: unclear metrics, lack of prioritization, capability gaps, unscaled skills, and weak strategic alignment.

- To cross the AI chasm, organizations must integrate AI into core operations, define measurable outcomes, and redesign workflows.

- HR leaders play a crucial role in bridging this gap by promoting skill development and ensuring AI is embedded in organizational processes.

Businesses face major gaps in AI adoption. Despite unprecedented investment, 95% of generative AI pilots fail to deliver measurable impact, and more than 80% of AI projects fail overall. According to McKinsey, 78% of organizations use AI in at least one business function, yet only 6% generate meaningful returns.

Why do so few organizations get value from AI? In this article, we examine the gap between enthusiastic experimentation and organizational impact and provide recommendations for how organizations can bridge it and achieve AI that delivers a high return on investment (ROI).

The AI chasm

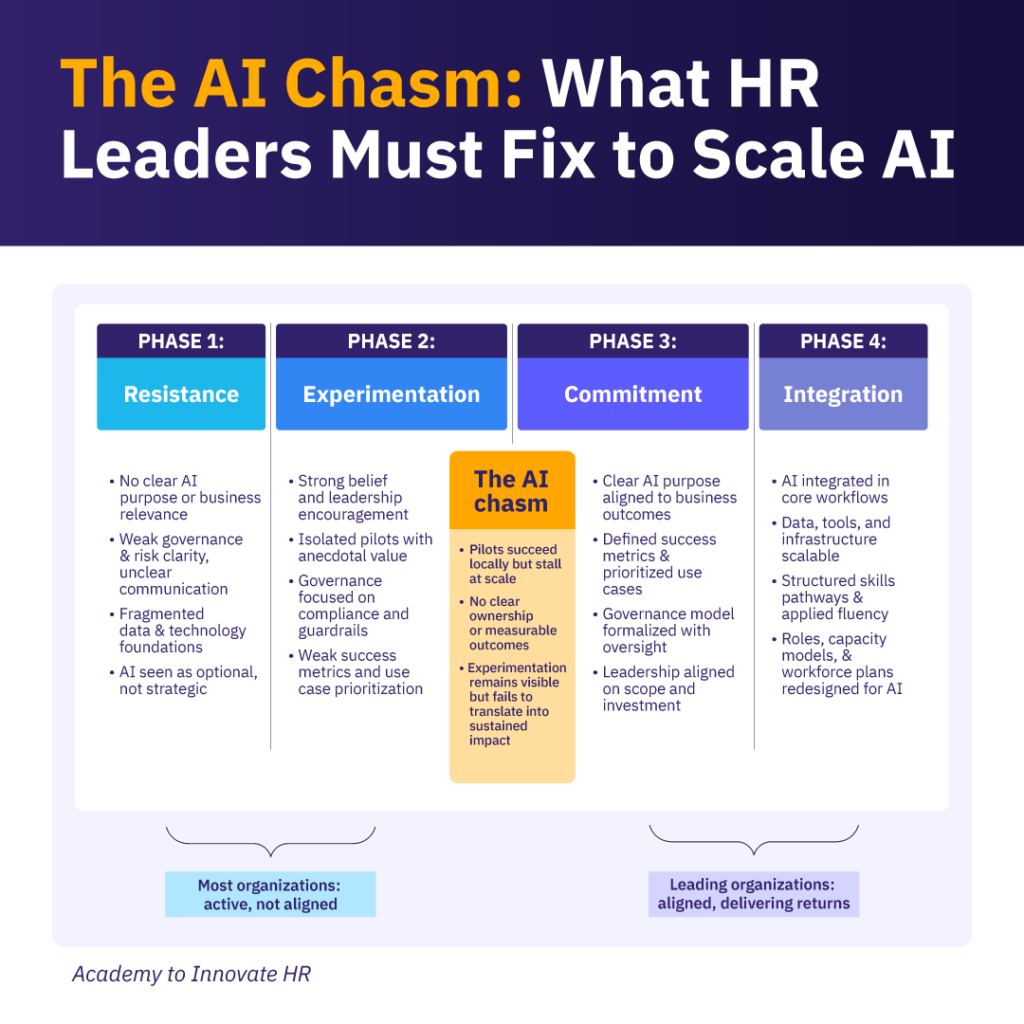

The AI chasm is the structural gap between experimentation and organizational commitment. It is the inflection point at which early pilots must move beyond isolated wins and become deliberate enterprise priorities.

Crossing this chasm has proven difficult, which is why we see widespread local AI experimentation that fails to scale across the enterprise.

Our research across more than 300 organizations confirms this. We define four phases of AI maturity: Resistance, Experimentation, Commitment, and Integration. The majority of organizations today remain in Phases 1 and 2, and only a small minority have crossed into Phase 3 or beyond.

The gap between Experimentation and Commitment is the AI chasm: the moment at which organizations must choose to continue experimenting at the edges or to redesign how AI is embedded into strategy, governance, capability, and workforce planning.

For most organizations, this is not a failure of ambition. Rather, it is a failure of translation.

Inside the AI chasm: 5 structural bottlenecks

Most organizations we’ve interacted with are experimenting without a clearly defined purpose. They cannot confidently answer: Where exactly will AI create value for us? How will we measure it? Which use cases matter most? Why do we want an AI agent? Without answers to these questions, experimentation is unlikely to translate into impact or high-ROI AI.

Compounding this, governance often focuses on setting guardrails rather than enabling use. Infrastructure exists, but it is not scalable or integrated into the tech stack. Skills are developing in pockets but are not embedded across business lines. In short, organizations are active but not yet aligned, and they lack an overarching strategy.

Despite strong leadership belief in AI, our data reveals five structural bottlenecks inside the chasm:

- Unclear value definition: Organizations lack clear AI success metrics, which leaves them with limited clarity on how to measure AI's impact.

- Lack of prioritization: Only 30% of organizations report a clearly defined purpose and prioritized AI use cases.

- Capability gap: Close to 70% of organizations do not feel they have the right data, tools, or infrastructure in place to leverage AI.

- Skills not scaled: Only 35% report having the skills and exposure needed to integrate AI into daily workflows.

- Lack of strategic alignment: Teams and departments are not aligned on what success looks like or where to focus, resulting in limited prioritization, failure to scale what works, and failure to eliminate what doesn’t.

These five bottlenecks do not exist in isolation. Maturity across strategy, governance, technology, people, and skills evolves together. Organizations that lack clarity on value often also lack scalable infrastructure and applied capability, while weak governance typically coincides with uneven skills and execution discipline.

Progress in one pillar rarely compensates for weakness in another. This is what makes the chasm difficult to cross: it is not a single bottleneck to fix, but a systemic shift that requires coordinated advancement across multiple dimensions at once.

Are you in the chasm? Diagnostic questions to answer

Before organizations can cross the chasm, they need to know where they stand. The following questions help identify whether your organization is still in experimentation, approaching the crossing, or actively making the shift:

- Can your leadership team name the top three AI use cases that are directly tied to measurable business outcomes, not just productivity experiments?

- Do you have a defined owner for each AI initiative, with clear accountability for results beyond the pilot phase?

- Is AI capability development embedded in role expectations and learning pathways, or is it still voluntary and ad hoc?

- Has your governance structure shifted from risk prevention to responsible enablement, with clear decision rights and escalation paths?

- Are your AI initiatives included in strategic planning cycles and resource-allocation conversations at the business-unit level?

If most answers are ‘no’ or ‘not yet,’ your organization is inside the chasm. The good news: recognizing the gap is the first step to closing it. You can benchmark your organization’s current readiness across all five dimensions using AIHR’s AI Readiness Assessment.

What crossing the AI chasm actually looks like

Organizations that successfully cross the chasm share a set of behaviors that distinguish them from those that continue to stall. The difference between them is not their level of enthusiasm about AI, but the discipline they apply in execution.

First, they redesign work, not just workflows. Where most organizations layer AI onto existing processes, organizations that cross the chasm ask a harder question: what does this role, team, or function look like when AI is a core input to how work gets done? The answer usually requires redesigning job responsibilities, decision rights, and performance expectations, not just providing access to new tools.

Finally, they formalize AI ownership at the business unit level. AI stops being an IT or innovation team initiative and becomes part of how each function plans, operates, and reports. This is the shift from AI as a project to AI as an operating model, and it is what separates isolated pilots from enterprise performance.

The cost of staying in the AI chasm

There is a tendency to treat prolonged experimentation as low risk: a cautious, responsible approach to a technology that is still evolving. The data suggests otherwise.

Organizations that remain in the chasm face compounding costs. Each quarter of fragmented AI activity widens the capability gap relative to competitors who are scaling successfully. Talent with AI fluency gravitates toward organizations where AI is embedded in how work is done, not just offered as an optional tool. And the longer AI remains an initiative rather than an operating model, the harder the cultural shift becomes: teams develop habits around workarounds, governance remains immature, and strategic leadership loses confidence in AI’s ROI.

The organizations generating meaningful returns from AI did not get there by experimenting more carefully. They got there by deciding to organize differently around AI, and then executing that decision with discipline across strategy, governance, technology, people, and skills.

How HR leaders can bridge the chasm

Crossing the chasm is not only a technology decision; it is an operating model shift that translates executive ambition into coordinated execution across roles, routines, and capabilities. It requires clarifying ownership, prioritizing use cases, defining measurable outcomes, and redesigning both work and workflows so that AI expectations are embedded at the role level.

The shift demands defining the skills AI-enabled roles require, embedding learning into daily work, and ensuring fluency scales beyond early adopters. It requires governance that enables responsible use through clear decision rights and accountability structures. Organizations stall when AI remains an initiative; they progress when it becomes part of how work is structured, governed, and led.

Conclusion

Crossing the AI chasm is not about ambition; it’s about translating intention into strategic action. This requires a vision, disciplined choices about what to double down on and what to let go, and a commitment to what the future organization should look like.

The difference between those who scale and those who stall is not enthusiasm but discipline. Clear outcomes, prioritized use cases, aligned governance, scalable capability, and redesigned work models separate isolated pilots from enterprise performance.

AI will not transform organizations because it is adopted. It will transform organizations when it is strategically aligned with business outcomes and embedded into how work is designed, measured, and led. Crossing the chasm is not about doing more with AI. It is about organizing differently around it.