As generative AI becomes embedded in everyday workflows, the HR conversation around AI has intensified. The HR community has debated which tools to adopt, which use cases create value, and how HR’s ways of working must evolve. At AIHR, we’ve also argued that AI fluency is a core capability for the HR professional of the future.

Yet beneath these discussions lies a more fundamental shift. AI is changing not only how HR works, but also how organizations make people decisions. And that’s where HR’s leadership and guidance are most needed. The real opportunity is not simply to deploy AI, but to elevate the quality, fairness, and integrity of our decision-making.

In this article, we explore how AI is reshaping people decisions, the four risks leaders must actively govern, and a practical lens to integrate AI in ways that can strengthen organizational trust.

Contents

How AI is changing the architecture of HR decision-making

4 risks to address before scaling AI in HR decisions

A practical framework for AI in HR decision-making

How AI is changing the architecture of HR decision-making

AI is influencing HR decision-making at multiple levels, from daily operational choices to strategic workforce planning.

For years, HR has been on a journey toward more evidence-based decision-making, from building analytics capabilities to strengthening reporting and investing in dashboards. Adoption of people analytics within HR functions has increased by 60% over the past five years, signaling the growing importance of data-driven decision-making. In addition, 74% of organizations report measurable improvements in workforce experiences through the use of people analytics.

Yet in many organizations, data still plays a supporting role. Insights often follow discussions rather than shape them, and metrics validate decisions more than they inform them.

AI changes that dynamic. Instead of reporting on what already happened, AI systems identify patterns across fragmented data sets and generate predictive insight. Leaders can move from retrospective reporting to forward-looking risk signals: from reviewing last year’s attrition to predicting which roles are most likely to turn over next quarter. Put simply, AI is changing how HR leaders evaluate evidence and make workforce decisions.

We discussed AI governance and the role of HR with John Rood, Founder of Proceptual, an AI compliance solution provider. Watch the full interview here:

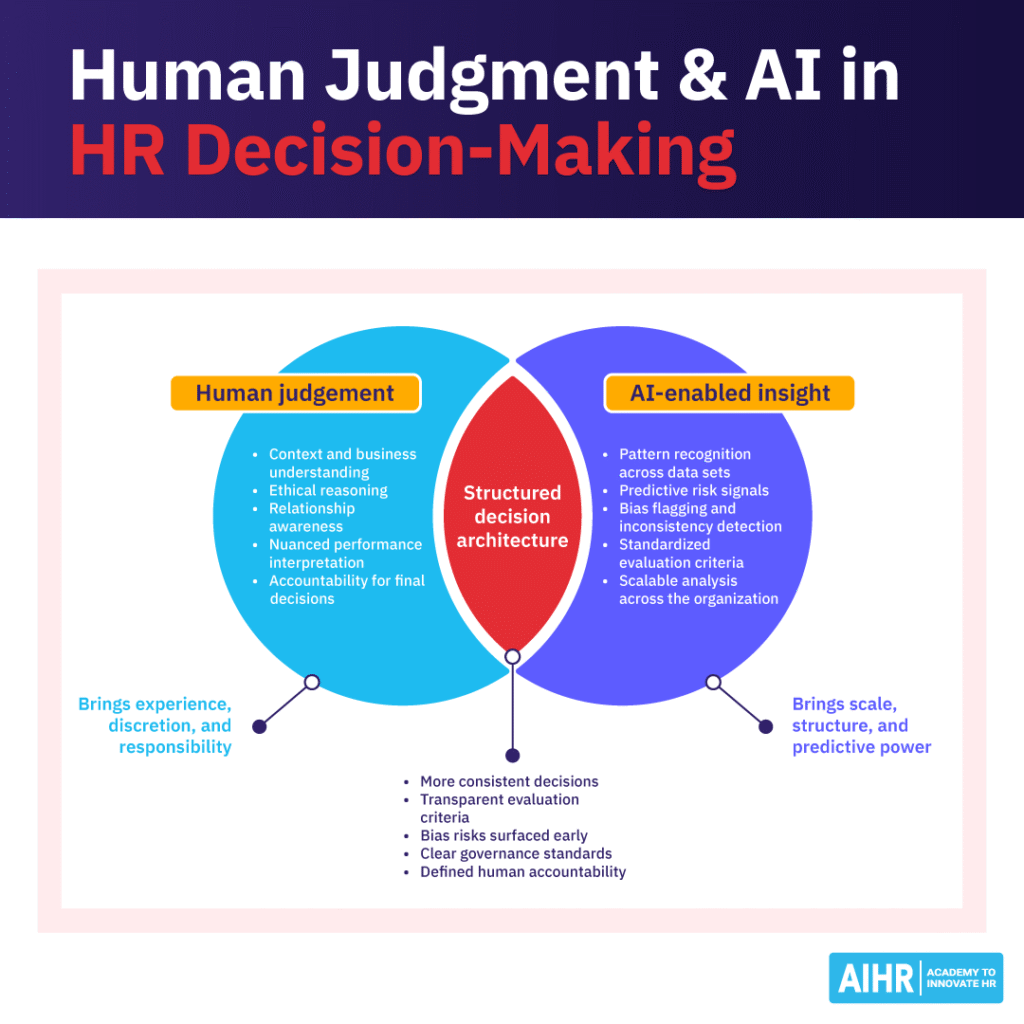

Balancing human judgment and AI in HR decisions

While human judgment has always shaped HR decisions, it has never been flawless. Decades of behavioral research show that managerial decisions are shaped by cognitive bias, incomplete information, and overconfidence. AI does not remove these dynamics, but it changes how organizations manage them. When well-designed and governed, AI can surface hidden patterns, highlight inconsistencies, and help reduce bias in decision-making.

Consider a promotion decision, one of the most consequential moments in an employee’s experience. Traditionally, a manager builds a case based on performance history, observed potential, and personal assessment. HR provides benchmarks or historical data, but the reasoning is explicit and human.

Now imagine that same decision supported by AI. The system surfaces patterns from prior promotions, highlights capability gaps associated with success, flags potential bias in historical ratings, and generates ranked recommendations. The manager’s judgment is no longer the sole driver. It is not ignored, but it becomes one input among several rather than the overriding factor.

This also represents a subtle redistribution of authority. The parameters embedded in a model, the variables selected, the thresholds defined, and the outcomes optimized reflect design choices. And those choices shape decisions long before a manager enters the conversation.

The opportunity is significant: greater consistency, earlier insight, and a more structured basis for high-stakes decisions, while retaining human oversight and responsibility.

At the most fundamental level, AI ethics is about alignment. How do we align the AI systems we develop, buy, or use with what we want them to do, and think carefully about how our use of AI affects other people? That alignment question is the foundation. The next level up is a simplified model we call the three cornerstones: transparency, bias prevention or mitigation, and explainability.

Adapting the HR operating model for AI-enabled decisions

Adopting AI in HR decision-making also changes the expectations of roles such as the HR Business Partner. Historically, HRBPs created impact through advice, judgment, and influence in the room. In an AI-enabled environment, their value increasingly lies in:

- Building scalable decision-making capability

- Knowing when to rely on data

- Knowing when to challenge system recommendations

- Designing decision processes that remain fair and accountable.

In other words, HR’s competitive advantage shifts from individual judgment to scalable decision architecture. Fairness, accountability, and discretion are no longer shaped only by human reasoning; systems shape them too. And that is where leadership must pay attention.

4 risks to address before scaling AI in HR decisions

As AI becomes embedded in people decisions, the risks extend beyond compliance or technical accuracy. They are leadership risks because they affect trust, culture, and credibility. And because AI rapidly scales decisions, it also scales their impact and their potential for harm.

There are four risks to actively mitigate when scaling AI in people decision-making:

1. Opacity and explainability

AI systems often rely on logic that is not easily visible, explainable, or transparent. When employees cannot understand how a decision was shaped, trust weakens.

Example: An employee is declined for an internal role because their “fit score” falls below the threshold, yet neither the manager nor HR can clearly explain how the AI model calculated that score.

Mitigation: Ensure that all AI model outputs are explainable and that leaders using them can justify and explain how the model reached its conclusions.

2. Maintaining fairness at scale

AI can reduce bias, but it can also institutionalize historical patterns. Unlike human bias, which is inconsistent, system-level bias can be replicated with precision and speed.

Example: A screening model consistently filters out candidates from non-traditional career paths because past hiring data favored linear trajectories.

Mitigation: Audit training data and maintain a human-in-the-loop approach for high-risk and critical decision-making.
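One way to start such an audit is to compare selection rates across groups in the model's outputs. The minimal sketch below applies the four-fifths rule of thumb from the US EEOC Uniform Guidelines, which treats a group's selection rate below 80% of the highest group's rate as a signal worth investigating. The function names, group labels, and counts are hypothetical and only illustrate the idea; a flag here is a prompt for human review, not proof of bias.

```python
from collections import Counter


def selection_rates(records):
    """Return {group: share selected} from (group, was_selected) pairs."""
    totals, selected = Counter(), Counter()
    for group, was_selected in records:
        totals[group] += 1
        if was_selected:
            selected[group] += 1
    return {group: selected[group] / totals[group] for group in totals}


def adverse_impact_flags(records, threshold=0.8):
    """Flag groups whose rate is below `threshold` times the highest rate."""
    rates = selection_rates(records)
    highest = max(rates.values())
    if highest == 0:
        # Nobody was selected, so there is no ratio to compare.
        return {group: False for group in rates}
    return {group: (rate / highest) < threshold for group, rate in rates.items()}


# Hypothetical screening outcomes: 40 of 100 candidates with linear careers
# pass the screen, versus 12 of 60 candidates with non-traditional careers.
records = (
    [("linear", True)] * 40
    + [("linear", False)] * 60
    + [("non_traditional", True)] * 12
    + [("non_traditional", False)] * 48
)

rates = selection_rates(records)
flags = adverse_impact_flags(records)
print(rates)
print(flags)
```

In this made-up data, the non-traditional group passes at half the rate of the linear group, so it is flagged for closer review by a human decision owner.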

3. Dehumanization

As decisions become more data-driven, employees may experience processes as transactional rather than relational. Being assessed by a system feels different from being seen by a leader.

Example: Performance discussions focus on productivity dashboards, leaving little room for context, nuance, or individual circumstances.

Mitigation: Ensure that AI does not replace human interaction or accountability, but instead helps managers and employees improve the quality of their interactions.

4. Diffused accountability

When AI informs decisions, responsibility can blur. Was it the manager’s call, or the system’s recommendation? Ambiguity undermines credibility. There is also a quieter risk: automation bias. When a system appears data-driven and precise, leaders may defer to it too quickly — mistaking algorithmic output for objective truth.

Example: A leader justifies a compensation decision by saying, “That’s what the system recommended,” without owning the outcome.

Mitigation: Establish clear human accountability for AI-generated outputs and decisions. The human being remains accountable for the decision made.

There’s a significant regulatory push in this direction. We do a lot of work with the EU AI Act regulation; we have a number of laws here in the United States as well. Global organizations really have to start thinking, with all of these measures going into effect over the next two years, what is the strategy for getting out ahead of that compliance hurdle?

None of these risks is an argument against AI. They are reminders that technology reshapes power, perception, and responsibility. They are also increasingly visible to regulators and courts, as AI-informed employment decisions come under greater scrutiny.

So how do we translate these principles into a framework that leaders can apply in their organizations every day?

A practical framework for AI in HR decision-making

Before adopting or scaling AI in any people process, leaders should work through a disciplined set of questions about whether and how AI should be applied. These questions act as decision gates: a proposed use of AI must pass each one before the organization proceeds.

Enhancing judgment

Does this strengthen human judgment rather than replace it?

Signals to proceed:

- Humans retain meaningful oversight

- Leaders can override the system

- AI augments insight and discernment

Signals to pause:

- Decision-making is fully automated

- Human review is symbolic or absent

- Critical thinking is removed

Clear accountability

Is there a clearly accountable human decision owner?

Signals to proceed:

- A named leader owns the decision

- The owner can explain and defend outcomes

- Responsibility is explicit

Signals to pause:

- Accountability is vague or diffused

- No one stands behind the outcome

Fair consequences

Have we actively assessed and mitigated unintended harm?

Signals to proceed:

- Bias and impact testing conducted

- Vulnerable groups considered

- Safeguards are in place

Signals to pause:

- Fairness assumed, not tested

- No disparate impact analysis

- Edge cases ignored

Explainability

Could we clearly explain this decision to those affected?

Signals to proceed:

- Logic can be explained in plain language

- Employees would understand the influence

- Leaders are comfortable explaining it publicly

Signals to pause:

- Explanation is unclear or overly technical

- Hesitation to communicate openly

Maintaining trust

Does this strengthen trust, dignity, and fairness?

Signals to proceed:

- Aligns with desired employee experience

- Builds confidence in leadership

- Reinforces organizational values

Signals to pause:

- Creates fear or distrust

- Conflicts with stated culture
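For teams that want to record gate reviews consistently, the five questions can be captured as a simple checklist. The sketch below is one possible way to do that, not a prescribed tool: the gate identifiers, the `GateReview` record, and the example notes are all hypothetical. It assumes that a gate nobody has reviewed counts as not passed, so a use case proceeds only when every gate has an explicit pass.

```python
from dataclasses import dataclass

# The five decision gates, in the order they are reviewed.
GATES = (
    "enhancing_judgment",
    "clear_accountability",
    "fair_consequences",
    "explainability",
    "maintaining_trust",
)


@dataclass
class GateReview:
    gate: str        # one of GATES
    passed: bool     # the reviewers' verdict for this gate
    note: str = ""   # short rationale recorded by the reviewers


def evaluate_use_case(reviews):
    """Proceed only if every gate has been reviewed and passed."""
    by_gate = {review.gate: review for review in reviews}
    to_revisit = [
        gate for gate in GATES
        if gate not in by_gate or not by_gate[gate].passed
    ]
    return {"proceed": not to_revisit, "gates_to_revisit": to_revisit}


# Hypothetical review of a proposed AI use case. The trust gate has not
# been reviewed yet, so it is treated as not passed.
reviews = [
    GateReview("enhancing_judgment", True, "Managers can override the system"),
    GateReview("clear_accountability", True, "Named leader owns the decision"),
    GateReview("fair_consequences", False, "No disparate impact analysis yet"),
    GateReview("explainability", True, "Logic explained in plain language"),
]

result = evaluate_use_case(reviews)
print(result)
```

Treating a missing review as a failure is a deliberate design choice in this sketch: it keeps a named human responsible for affirming each gate rather than letting silence count as approval.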

AI in HR decision-making framework in action: A Singaporean business using AI in the performance management process

When the executive team proposed replacing annual performance reviews with an AI-driven continuous scoring system, the ambition was to reduce bias and make talent decisions more objective.

But when HR pressure-tested the idea against the five decision gates, several concerns emerged.

Decision gate 1: Enhancing judgment

Would this strengthen managerial judgment, or replace it? Having AI assign and process incentives based on the scoring risked the technology becoming the decision-maker, rather than the decision support.

Decision gate 2: Clear accountability

Who, precisely, owned the final call? The algorithm? The manager? The executive team? The lack of a clearly accountable human decision-maker exposed a governance gap.

Decision gate 3: Fair consequences

Early analysis showed potential unintended harm, particularly for lower-visibility roles and employees returning from leave.

Decision gate 4: Explainability

Could leaders explain, in plain language, how scores were generated and how they influenced outcomes? If the answer required technical defensiveness, the system wasn’t ready.

Decision gate 5: Maintaining trust

Would employees experience this as fair and dignified? Trust depends as much on perception as precision.

Based on these decision gates, the team redesigned the process to augment annual reviews rather than replace them with AI. AI helped employees prepare, enabled managers to check decisions for bias, and allowed executives to analyze trends to improve calibration over time.

Final words

AI will continue to evolve. Models will improve. Adoption will accelerate. But the defining question for HR will remain unchanged: Who safeguards the integrity of people decisions?

HR’s responsibility is not to master every algorithm, but to set the standards that guide decisions, embedding fairness, accountability, and employee experience into the systems that shape workforce outcomes.